GPT-4 Prompt Engineering: How to Get Started


You've heard of GPT-4, OpenAI's latest language model that's taken the tech world by storm. It's not just another upgrade; it's a game-changer in many ways. But how do you make the most of this cutting-edge technology? The answer lies in understanding the ins and outs of "prompt engineering."

Let's dive into this comprehensive guide to GPT-4 prompt engineering. It covers everything from the basics of GPT-4 to improving its output with advanced prompting techniques.

Section 1: A Close Look at GPT-4

What Are GPT-4's Capabilities?

GPT-4, or Generative Pre-trained Transformer 4, is a powerful language model developed by OpenAI. It has achieved human-level performance on various professional and academic benchmarks. In layman's terms, it's like having a super-smart assistant that can perform tasks ranging from writing code to answering complex questions.

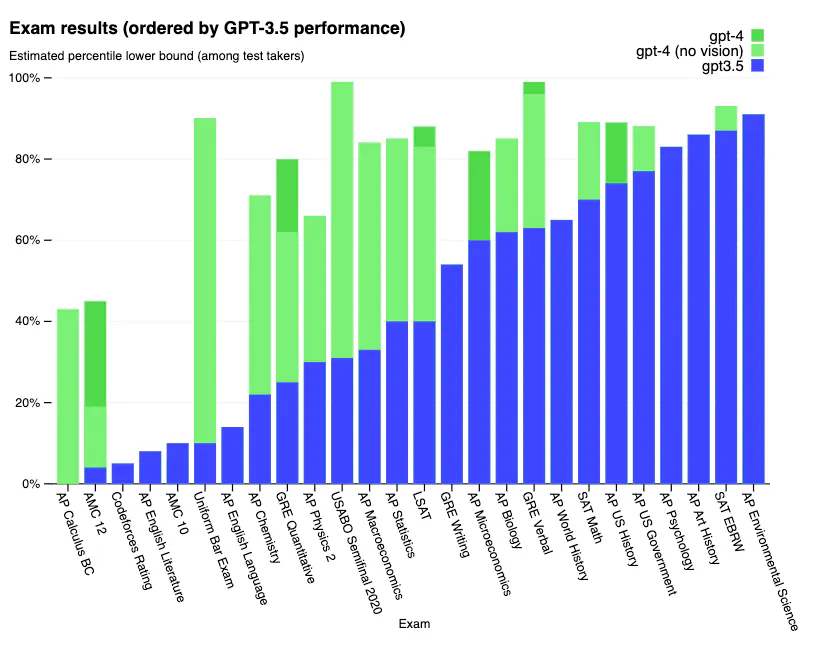

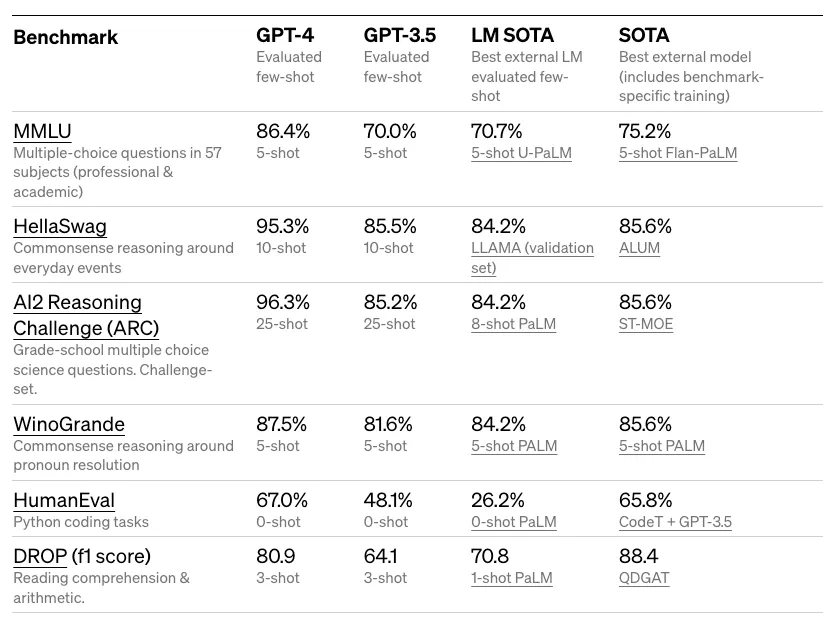

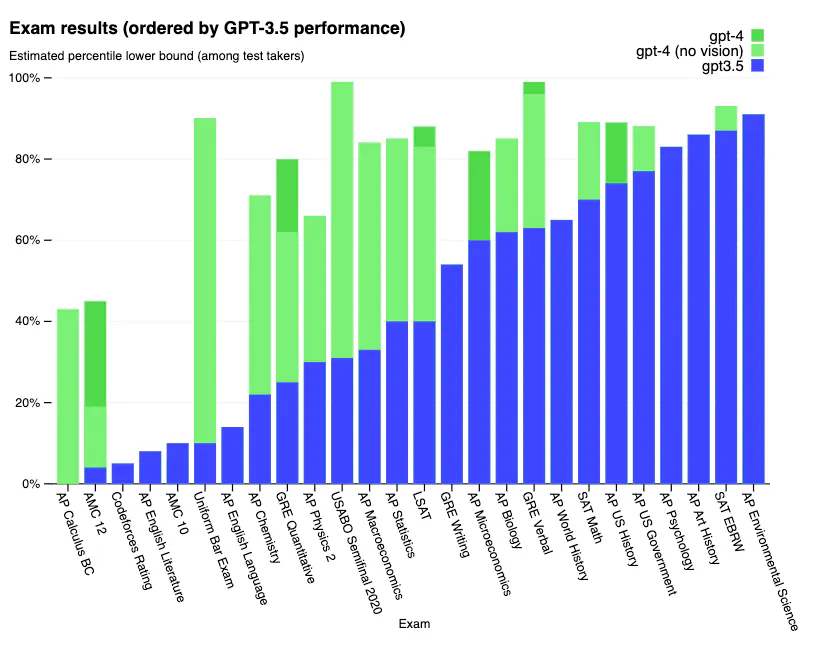

- Impressive Benchmark Scores: GPT-4 scored around the top 10% of test-takers on a simulated bar exam. It also performs strongly on demanding benchmarks such as MMLU (Massive Multitask Language Understanding) and HellaSwag.

- Factuality and Steerability: OpenAI aligned GPT-4 using lessons from its adversarial testing program and from ChatGPT, making the model more factual and easier to steer with user instructions.


What's New in GPT-4?

Before diving into prompt engineering, it's crucial to understand what makes GPT-4 different from its predecessors.

- Adversarial Testing: OpenAI used adversarial testing to make the model's output more factual and reliable.

- Reduced Hallucination: While not free from errors, GPT-4 hallucinates less and makes fewer reasoning mistakes than earlier versions.

Vision Capabilities in GPT-4

Although GPT-4's image input is not yet publicly available, the model was designed to accept images as well as text. For now, it outshines GPT-3.5 on text-based tasks by being more reliable and creative.

- Prompting with Images: Standard prompting techniques such as few-shot and chain-of-thought prompting are similarly effective when a prompt combines an image with text.

Example: Given a chart as image input, you can prompt GPT-4 as follows to get a step-by-step analysis:

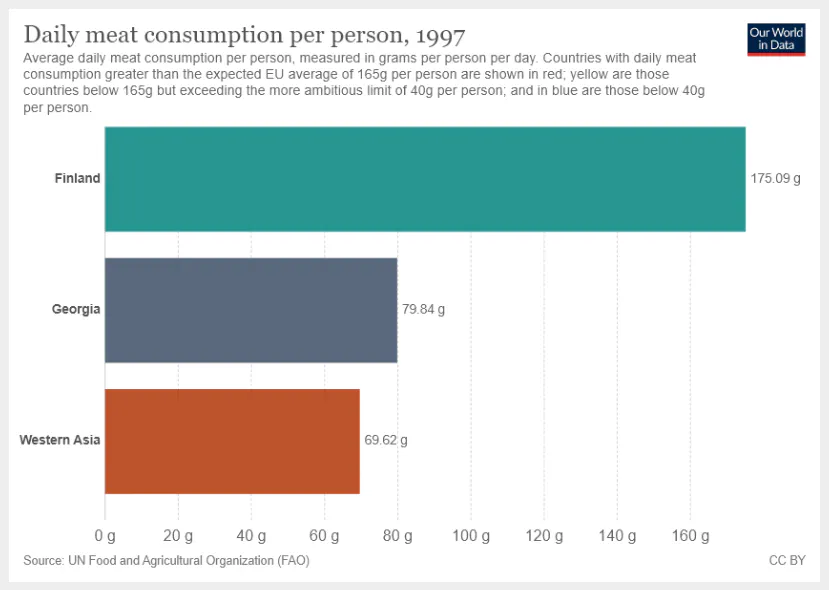

(prompt) "What is the sum of average daily meat consumption for Georgia and Western Asia? Provide a step-by-step reasoning before providing your answer."

GPT-4 would then provide a detailed, step-by-step calculation based on the chart in the image.

That's just the tip of the iceberg. The real magic happens when you master GPT-4 prompt engineering, a skill we'll explore in the next sections.

Section 2: Mastering Prompt Engineering with GPT-4

Starting with Basic Prompts

The art of prompt engineering begins with understanding how to craft simple, effective prompts. You might be surprised to know that a small tweak in your prompt can lead to dramatically different results. For instance, the prompt "tell me a joke" might get you a generic joke, but what about asking, "tell me a joke about quantum physics"? The latter focuses the AI's knowledge base on a specific subject, leading to a more targeted response.

- Iterative Refinement: Start with a generic prompt and adjust it incrementally to narrow down to the output you want.

- Prompt Sensitivity: GPT-4 is sensitive to phrasing, so even minor changes to a prompt can produce noticeably different, and often more refined, answers.

Example: Suppose you want programming advice. Your initial prompt might be:

(prompt) "Give me some programming advice."

Refining this could lead to:

(prompt) "Give me some advanced Python programming advice for data analysis."
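To make the refinement loop repeatable, it can help to change one thing per round and keep every version. The sketch below uses a hypothetical `build_prompt` helper (not part of any library) that assembles the programming-advice prompt from optional qualifiers. Each round adds one qualifier, so any change in GPT-4's answer can be traced back to a single edit.

```python
# Sketch of iterative refinement: start generic, then add one qualifier
# per round. `build_prompt` is a hypothetical helper, not a library function.


def build_prompt(level: str = "", language: str = "", domain: str = "") -> str:
    """Assemble the programming-advice prompt from optional qualifiers."""
    # Qualifiers such as "advanced" or "Python" go in front of "programming advice".
    qualifiers = " ".join(part for part in (level, language) if part)
    subject = f"{qualifiers} programming advice" if qualifiers else "programming advice"
    # An optional domain is appended as "for <domain>".
    suffix = f" for {domain}" if domain else ""
    return f"Give me some {subject}{suffix}."


# Each round keeps the previous settings and adds exactly one qualifier.
rounds = [
    {},
    {"language": "Python"},
    {"language": "Python", "domain": "data analysis"},
    {"level": "advanced", "language": "Python", "domain": "data analysis"},
]

for i, settings in enumerate(rounds):
    print(f"Round {i}: {build_prompt(**settings)}")
```

Running it prints four versions, starting with the generic prompt and ending with the refined prompt from the example. The refinement itself happens when you send each version to the model and compare the replies side by side.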

Steering GPT-4 for Specific Needs

System messages play a vital role in steering GPT-4 to produce outputs in specific formats. It's a feature that essentially gives you more control over the structure of the generated text, whether you want JSON, XML, or any other custom format.

- System Messages: These are instructions placed before the user's prompt (in OpenAI's Chat Completions API, a message with the role "system") that tell the model how to behave, including the desired output format.

- Structured Output: Requesting a fixed format makes generated text, including sample data, easier to parse and integrate with other systems.

Example: To generate a list of tips in JSON format, you could use:

(prompt) "SYSTEM: You are an AI model and should output in JSON format."

(prompt) "USER: Provide five tips for effective prompt engineering."
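In code, the SYSTEM and USER prompts correspond to a list of role/content messages, which is the format OpenAI's Chat Completions API accepts. The sketch below runs offline and should be read as an illustration only. `sample_reply` is a made-up response and `parse_tips` is a hypothetical helper. The extra system sentence naming a `tips` key is an added assumption that gives the reply a predictable shape. The actual API call appears only as a comment, because it needs the `openai` package and an API key.

```python
import json

# The SYSTEM/USER prompts as a role/content message list (Chat Completions shape).
# Assumption: the second system sentence naming a "tips" key is added so the
# reply can be parsed reliably; it is not part of the original prompt.
messages = [
    {"role": "system",
     "content": "You are an AI model and should output in JSON format. "
                'Return an object with one key, "tips", holding a list of strings.'},
    {"role": "user",
     "content": "Provide five tips for effective prompt engineering."},
]

# Sending the request needs the `openai` package (v1 or later) and an API key:
#   from openai import OpenAI
#   response = OpenAI().chat.completions.create(model="gpt-4", messages=messages)
#   reply_text = response.choices[0].message.content
# `sample_reply` is a hypothetical reply so that this sketch runs offline.
sample_reply = (
    '{"tips": ["Be specific", "Give examples", "State the output format", '
    '"Iterate on the prompt", "Ask for step-by-step reasoning"]}'
)


def parse_tips(text: str, expected: int = 5) -> list[str]:
    """Parse the model's JSON reply and check that it has the requested shape."""
    data = json.loads(text)  # raises json.JSONDecodeError if the reply is not JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    tips = data.get("tips")
    if not isinstance(tips, list) or len(tips) != expected:
        raise ValueError(f"expected a list of {expected} tips, got {tips!r}")
    return [str(tip) for tip in tips]


for tip in parse_tips(sample_reply):
    print("-", tip)
```

Validating the reply is worthwhile because a system message makes the requested format likely but does not guarantee it. If parsing fails, you can retry or re-prompt.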

Exploring Advanced Prompt Engineering Techniques

For more advanced prompt engineering, two techniques take center stage: in-context learning and chain-of-thought prompting.

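- In-Context Learning: GPT-4 can learn from examples placed in the prompt or earlier in the conversation and use them to guide its later responses, without any retraining.

- Chain-of-Thought Prompting: This asks the model to spell out intermediate reasoning steps before giving its final answer, which helps with multi-step reasoning or computation. You can encourage it with an explicit instruction, with worked examples, or by supplying the first steps of the reasoning yourself.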
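In-context learning and chain-of-thought prompting are often combined by giving worked examples that show their reasoning. The sketch below assumes Chat Completions-style messages. The unit-conversion examples and the `few_shot_messages` helper are illustrative and do not come from any official guide. The examples are supplied as earlier user/assistant turns. No model weights change, because the model only sees the examples as context.

```python
# Sketch of few-shot (in-context) prompting with chain-of-thought examples.
# The example pairs and the `few_shot_messages` helper are hypothetical.

EXAMPLES = [
    ("Convert 3 km to meters.",
     "1 km = 1000 m, so 3 km = 3 * 1000 = 3000 m. Answer: 3000"),
    ("Convert 2.5 kg to grams.",
     "1 kg = 1000 g, so 2.5 kg = 2.5 * 1000 = 2500 g. Answer: 2500"),
]


def few_shot_messages(question: str, examples=EXAMPLES, step_by_step: bool = True) -> list[dict]:
    """Build a Chat Completions-style message list with worked examples."""
    system = "Answer unit-conversion questions in the same style as the examples."
    if step_by_step:
        # Chain-of-thought instruction: reasoning first, final answer last.
        system += " Show your reasoning step by step, then give 'Answer: <number>'."
    messages = [{"role": "system", "content": system}]
    # Each worked example becomes a user turn followed by an assistant turn.
    for user_text, assistant_text in examples:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    # The real question comes last, so the model continues the pattern.
    messages.append({"role": "user", "content": question})
    return messages


msgs = few_shot_messages("Convert 750 mL to liters.")
for m in msgs:
    print(f"{m['role']:>9}: {m['content']}")
```

Placing the examples as separate turns, instead of one long user message, keeps each example's question and answer clearly delimited. Either layout is a common choice.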

Example: To guide GPT-4 through a multi-step math problem, you could use:

(prompt) "Calculate the area under the curve of the function f(x) = x^2 from x=0 to x=2."

(prompt) "To do this, we will use integration. An antiderivative of f(x) = x^2 is F(x) = x^3/3. Calculate the area using this information."
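Because you supplied the method, you can also check the model's final number independently. The expected result is F(2) - F(0) = 8/3, or about 2.667. The sketch below computes that exact area from the antiderivative in the second prompt and cross-checks it with a midpoint Riemann sum. It then compares both against `model_answer`, a hypothetical value standing in for a number parsed from GPT-4's reply.

```python
from fractions import Fraction

# Reference check for the integration example. `model_answer` is a
# hypothetical value, not real GPT-4 output.


def F(x: Fraction) -> Fraction:
    """Antiderivative of f(x) = x^2, as given in the second prompt."""
    return x ** 3 / 3


# Fundamental theorem of calculus: area = F(2) - F(0), computed exactly.
exact = F(Fraction(2)) - F(Fraction(0))

# Independent numeric check: midpoint Riemann sum with n rectangles on [0, 2].
n = 10_000
width = 2 / n
riemann = sum(((i + 0.5) * width) ** 2 * width for i in range(n))

model_answer = 2.667  # hypothetical number extracted from the model's reply
print("exact:", exact, "=", float(exact))
print("midpoint sum:", riemann)
print("model answer acceptable:", abs(model_answer - float(exact)) < 1e-2)
```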

By mastering these techniques, you're not just asking GPT-4 to perform tasks; you're steering it, almost like a co-pilot, towards the exact destination you have in mind.

Section 3: GPT-4's Strengths and Weaknesses

How Accurate is GPT-4?

Accuracy remains one of the most critical factors in assessing GPT-4's capabilities. Data from the TruthfulQA benchmark indicates that GPT-4 shows a 5% improvement in factuality over GPT-3.5, and well-engineered prompts can improve accuracy further.

- TruthfulQA Benchmark: On this benchmark, GPT-4 has a noticeable edge over its predecessor in factual accuracy.

- Prompt Refinement: Well-engineered prompts can reduce incorrect or incomplete answers.

Example: To assess the model's accuracy, you could use a prompt like:

(prompt) "Provide factual information about the boiling point of water under various atmospheric pressures."
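To judge whether the answer to this prompt is actually factual, compare its numbers against an independent reference. The sketch below assumes the Antoine equation with commonly tabulated constants for water. It takes pressure in mmHg and gives temperature in degrees C, and it is valid roughly from 1 to 100 degrees C, so it only covers pressures at or below about one atmosphere. Check the constants against a reference table before relying on them. `boiling_point_c` and `within_tolerance` are hypothetical helper names.

```python
import math

# Assumption: Antoine constants commonly tabulated for water,
# with P in mmHg and T in degrees C, valid roughly for 1-100 degrees C.
A, B, C = 8.07131, 1730.63, 233.426
MMHG_PER_KPA = 760 / 101.325  # 1 atm = 101.325 kPa = 760 mmHg


def boiling_point_c(pressure_kpa: float) -> float:
    """Estimate water's boiling point (degrees C) at a pressure given in kPa."""
    p_mmhg = pressure_kpa * MMHG_PER_KPA
    # Antoine equation log10(P) = A - B / (C + T), solved for T.
    return B / (A - math.log10(p_mmhg)) - C


def within_tolerance(model_value: float, pressure_kpa: float, tol: float = 1.0) -> bool:
    """True if a value quoted by the model is within `tol` degrees of the estimate."""
    return abs(model_value - boiling_point_c(pressure_kpa)) <= tol


for kpa in (101.325, 70.0, 50.0):
    print(f"{kpa:>8.3f} kPa -> {boiling_point_c(kpa):6.2f} C")
```

A tolerance check like `within_tolerance` accounts for rounding in the model's answer while still flagging values that are clearly wrong.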

Making GPT-4 More Reliable

GPT-4's reliability can be improved through systematic experimentation and creative combinations of prompt engineering techniques.

- Experimentation Strategies: Try zero-shot, few-shot, and chain-of-thought versions of the same prompt to see how each performs under different conditions.

- Combining Techniques: Techniques can be combined, for example few-shot examples that include step-by-step reasoning, for more accurate and reliable results.
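A small harness makes this kind of experimentation systematic: run every prompting strategy over the same test questions and score the answers the same way. In the sketch below, `stub_model` is a hypothetical placeholder that simply computes the arithmetic, so every strategy scores 100%. The scores only become informative once you replace it with a real GPT-4 call. The test set and prompt templates are also illustrative assumptions.

```python
import re
from typing import Callable

# Hypothetical test set of (question, expected answer) pairs.
TEST_SET = [("What is 12 * 11?", "132"), ("What is 15 + 27?", "42")]


def zero_shot(q: str) -> str:
    # No examples: just the question in Q/A form.
    return f"Q: {q}\nA:"


def few_shot(q: str) -> str:
    # One worked example before the real question.
    return "Q: What is 3 * 4?\nA: 12\n" + zero_shot(q)


def chain_of_thought(q: str) -> str:
    # Ask for intermediate reasoning before the final answer.
    return f"Q: {q}\nThink step by step, then finish with 'A: <number>'."


STRATEGIES: dict[str, Callable[[str], str]] = {
    "zero-shot": zero_shot,
    "few-shot": few_shot,
    "chain-of-thought": chain_of_thought,
}


def extract_answer(reply: str) -> str:
    """Treat the text after the last 'A:' marker as the final answer."""
    return reply.rsplit("A:", 1)[-1].strip()


def stub_model(prompt: str) -> str:
    """Placeholder for a real GPT-4 call: solves the last 'What is X op Y?' in the prompt."""
    x, op, y = re.findall(r"What is (\d+) ([*+]) (\d+)\?", prompt)[-1]
    result = int(x) * int(y) if op == "*" else int(x) + int(y)
    return f"A: {result}"


def accuracy(model: Callable[[str], str], strategy: Callable[[str], str]) -> float:
    """Fraction of test questions answered correctly under one prompting strategy."""
    hits = sum(extract_answer(model(strategy(q))) == gold for q, gold in TEST_SET)
    return hits / len(TEST_SET)


results = {name: accuracy(stub_model, fn) for name, fn in STRATEGIES.items()}
for name, score in results.items():
    print(f"{name:>16}: {score:.0%}")
```

Keeping the answer extraction identical across strategies matters. Otherwise a difference in scores could reflect the parsing rather than the prompt.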

Conclusion

The landscape of AI and language models has been dramatically reshaped with the advent of GPT-4. Through techniques like prompt engineering, we can now tailor GPT-4 to a variety of specialized tasks, making it not just a jack-of-all-trades, but a master of many. Whether it's acing professional benchmarks, steering the model for personalized outputs, or preparing for its future capabilities in vision, GPT-4 stands as a monumental achievement in the field of AI.

What is Prompt Engineering in GPT?

Prompt engineering is the process of crafting effective queries or statements to guide the output of a GPT model to meet specific needs or criteria.

What are the Prompting Techniques in GPT-4?

Prompting techniques in GPT-4 include basic prompting, system message steering, in-context learning, and chain-of-thought prompting.

How do I Prompt GPT-4?

To prompt GPT-4, you input a text query or statement and receive a text output. You can refine the prompt iteratively and use system messages for more specialized outputs.

How Can You Use ChatGPT for Engineering?

ChatGPT can be used for engineering tasks by utilizing specialized prompts and system messages to generate code snippets, data sets, and more.