Tree of Thoughts Prompting

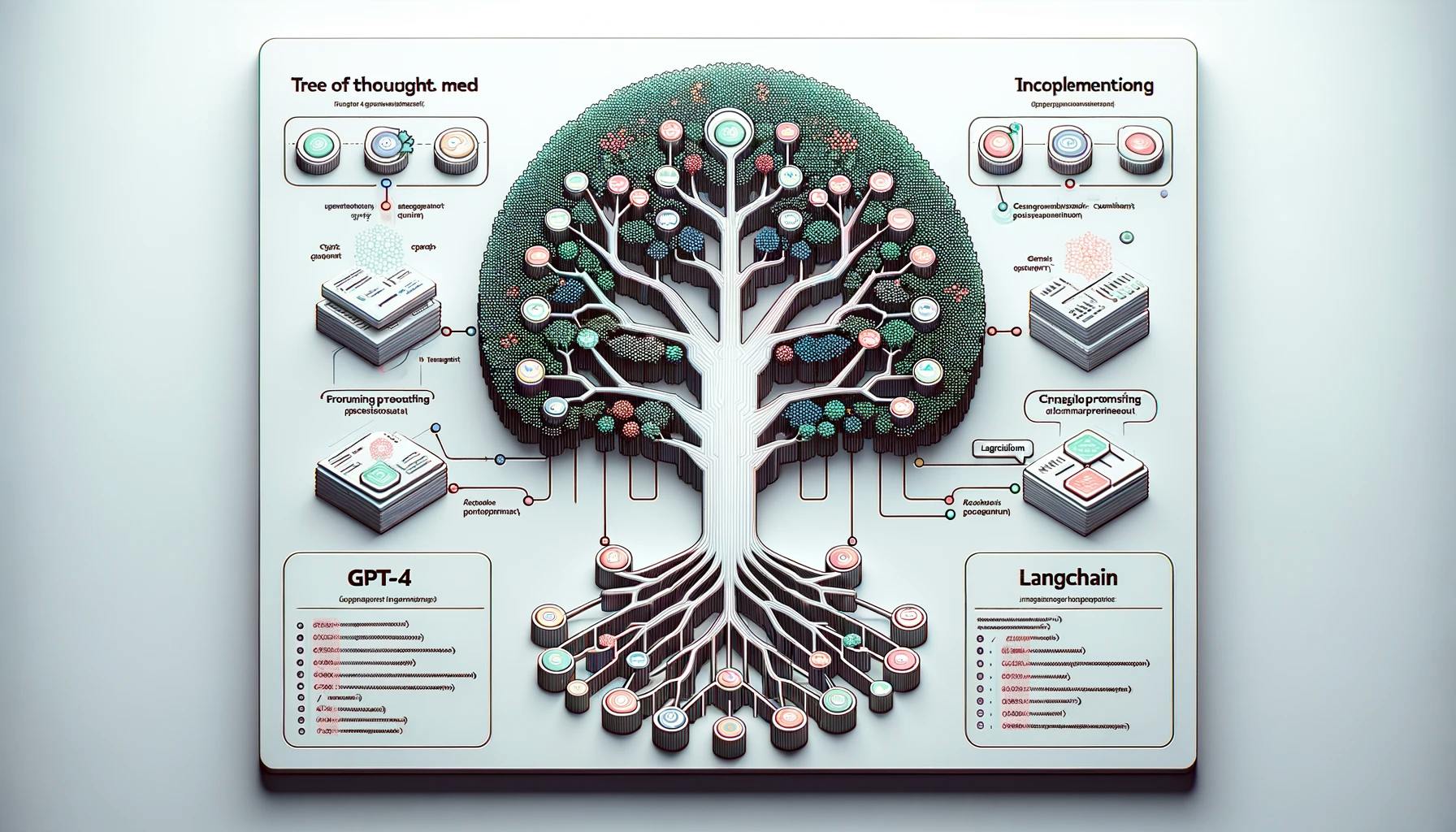

Welcome to the future of Prompt Engineering! If you've been grappling with the limitations of traditional prompting methods, you're in for a treat. The Tree of Thoughts Prompting technique is a groundbreaking approach that promises to redefine how we interact with Large Language Models (LLMs) like GPT-4.

In this comprehensive guide, we'll delve into the nuts and bolts of this innovative technique. From its hierarchical structure to its seamless integration with LLMs and its practical implementation in LangChain, we've got you covered. So, let's get started!

Fundamentals of Tree of Thoughts Prompting

What is Tree of Thoughts Prompting?

Tree of Thoughts Prompting, or ToT, is a prompting technique in which an LLM explores several lines of reasoning instead of committing to a single one. Unlike conventional prompting, which produces one linear chain of output, ToT organizes intermediate reasoning steps ("thoughts") into a hierarchical structure, evaluates them along the way, and backs out of branches that look unpromising. This yields more focused and relevant responses, making it particularly useful for complex queries.

- Hierarchical Structure: The technique uses a tree-like structure where each node represents a thought or idea. This allows for branching into multiple directions, offering a wide array of solutions.

- Dynamic Evaluation: At each node, the LLM evaluates the effectiveness of the thought and decides whether to proceed or explore alternative branches.

- Focused Responses: By guiding the LLM through a structured thought process, ToT ensures that the generated responses are not just relevant but also contextually rich.

Why Is Tree of Thoughts Prompting a Game-Changer?

The Tree of Thoughts Prompting technique is revolutionary for several reasons:

- Enhanced Problem-Solving: It allows for the exploration of multiple avenues before settling on the most promising one. This is crucial for complex problem-solving tasks.

- Optimized Resource Utilization: Exploring a tree costs more LLM calls than a single prompt, but evaluating each thought at every node lets the search drop weak branches early, so the extra computation is spent on the most promising paths rather than on the whole tree.

- Seamless Integration with LLMs: ToT is compatible with advanced LLMs like GPT-4, making it a versatile tool in the field of Prompt Engineering.
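To make the resource trade-off concrete, here is a rough count of how many thoughts get generated with and without pruning. The branching factor, depth, and number of branches kept per level are illustrative assumptions, not figures from any benchmark, and the function names are hypothetical.

```python
def exhaustive_node_count(branching: int, depth: int) -> int:
    """Thoughts generated when every branch is expanded down to `depth` levels."""
    return sum(branching ** level for level in range(1, depth + 1))


def pruned_node_count(branching: int, depth: int, keep: int) -> int:
    """Thoughts generated when only the `keep` best nodes per level are expanded."""
    total = 0
    frontier = 1  # start from the single root thought
    for _ in range(depth):
        generated = frontier * branching
        total += generated
        frontier = min(keep, generated)  # prune down to the best `keep` nodes
    return total


full = exhaustive_node_count(branching=3, depth=4)
pruned = pruned_node_count(branching=3, depth=4, keep=2)
print(full, pruned)
```

With these assumed numbers, expanding everything generates 120 thoughts, while keeping the two best nodes per level generates 21.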

How Does the Hierarchical Framework Work in Tree of Thoughts Prompting?

Understanding the hierarchical framework of Tree of Thoughts Prompting is key to leveraging its full potential. Each 'tree' starts with a root thought, which then branches out into various nodes representing different lines of thought or solutions. These nodes can further branch out, creating a complex web of interconnected ideas.

- Root Thought: This is the initial idea or question that serves as the starting point of the tree. For example, if you're trying to solve a mathematical problem, the root thought could be the main equation.

- Branching Nodes: These are the various solutions or approaches that stem from the root thought. Each node is a potential path to explore.

- Leaf Nodes: These are the final thoughts or solutions that do not branch out any further. They represent the end-points of each line of thought.

By navigating through this hierarchical structure, you can explore multiple solutions simultaneously, evaluate their effectiveness, and choose the most promising one. This is particularly useful in scenarios where a single solution may not suffice, such as in complex engineering problems or multi-faceted business decisions.
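The root, branching, and leaf nodes described above map naturally onto a small tree data structure. The sketch below is one possible representation; the `ThoughtNode` class and the child thought "Simplify navigation" are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ThoughtNode:
    """One thought in the tree; `score` is filled in when the node is evaluated."""
    text: str
    parent: Optional["ThoughtNode"] = field(default=None, repr=False, compare=False)
    children: list["ThoughtNode"] = field(default_factory=list)
    score: Optional[float] = None

    def add_child(self, text: str) -> "ThoughtNode":
        child = ThoughtNode(text=text, parent=self)
        self.children.append(child)
        return child

    def is_leaf(self) -> bool:
        return not self.children

    def path(self) -> list[str]:
        """Thoughts from the root down to this node."""
        node, steps = self, []
        while node is not None:
            steps.append(node.text)
            node = node.parent
        return list(reversed(steps))

    def leaves(self) -> list["ThoughtNode"]:
        if self.is_leaf():
            return [self]
        return [leaf for child in self.children for leaf in child.leaves()]


root = ThoughtNode("How to improve user engagement on a website?")
ui = root.add_child("Improve UI/UX")
root.add_child("Implement gamification")
nav = ui.add_child("Simplify navigation")
print(nav.path())
print([leaf.text for leaf in root.leaves()])
```

Keeping a `parent` reference makes it cheap to recover the full line of thought behind any leaf, which is what you would hand back to the LLM when asking it to continue or evaluate that branch.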

How Do Large Language Models Enhance Tree of Thoughts?

How Tree of Thoughts Prompting Works with LLMs

The Tree of Thoughts Prompting technique isn't just a standalone marvel; it becomes even more potent when integrated with Large Language Models like GPT-4. These LLMs bring broad knowledge from their training data and strong language understanding to the table, making the thought process not just structured but also well informed.

- Data-Driven Decisions: LLMs have been trained on vast datasets, so they can draw on relevant knowledge when proposing and assessing each thought in the tree. This enhances the quality of the output.

- Contextual Understanding: One of the strengths of LLMs is their ability to understand context. When integrated with Tree of Thoughts, this contextual understanding is applied at each node, making the thought process more nuanced and targeted.

- Dynamic Adaptability: LLMs can adapt their responses based on the feedback received at each node. This dynamic nature ensures that the tree can pivot or adjust its course as needed, making the process highly flexible.
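One way to picture the LLM's two roles at each node, proposing thoughts and rating them, is the sketch below. The `LLM` callable type, the prompt wording, the 0 to 10 rating scale, and the canned `fake_llm` are all assumptions made for illustration; they do not come from any particular library.

```python
import re
from typing import Callable

LLM = Callable[[str], str]  # hypothetical: takes a prompt, returns the model's text


def propose_thoughts(llm: LLM, problem: str, path: list[str], k: int) -> list[str]:
    """Ask the model for up to k candidate next thoughts, one per line."""
    prompt = (
        f"Problem: {problem}\n"
        f"Reasoning so far: {' -> '.join(path) or '(none)'}\n"
        f"Propose {k} distinct next steps, one per line."
    )
    lines = [line.strip("-• \t") for line in llm(prompt).splitlines()]
    return [line for line in lines if line][:k]


def evaluate_thought(llm: LLM, problem: str, path: list[str]) -> float:
    """Ask the model to rate a partial solution from 0 to 10; return a 0..1 score."""
    prompt = (
        f"Problem: {problem}\n"
        f"Partial solution: {' -> '.join(path)}\n"
        "Rate how promising this is from 0 to 10. Answer with a number only."
    )
    match = re.search(r"\d+(?:\.\d+)?", llm(prompt))
    if match is None:
        return 0.0  # treat unparseable replies as unpromising
    return min(float(match.group()), 10.0) / 10.0


def fake_llm(prompt: str) -> str:
    """Canned stand-in so the sketch runs offline; swap in a real model call."""
    if prompt.rstrip().endswith("one per line."):
        return "- Improve UI/UX\n- Implement gamification\n- Personalize content"
    return "Score: 7"


problem = "How to improve user engagement on a website?"
thoughts = propose_thoughts(fake_llm, problem, path=[], k=3)
score = evaluate_thought(fake_llm, problem, path=[thoughts[0]])
print(thoughts, score)
```

Passing the path so far into both prompts is what gives each node its context: the model sees the whole line of thought, not just the latest step.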

The Role of Heuristic Search in Tree of Thoughts Prompting

Heuristic search algorithms play a crucial role in this synergy. These algorithms guide the LLM through the tree, helping it evaluate the effectiveness of each thought or node. They apply a set of rules or heuristics to determine which branches are worth exploring further and which should be pruned.

- Efficiency: Heuristic search speeds up the process by eliminating less promising branches early on, thereby saving computational resources.

- Optimization: The algorithm continuously optimizes the path, ensuring that the LLM focuses on the most promising lines of thought.

- Feedback Loop: The heuristic search creates a feedback loop with the LLM, allowing for real-time adjustments and refinements to the thought process.

By combining the computational prowess of LLMs with the structured approach of Tree of Thoughts, you get a system that is not just efficient but also incredibly intelligent. This makes it a formidable tool in the field of Prompt Engineering, particularly when dealing with complex queries or problems that require a multi-faceted approach.
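As a rough illustration of heuristic search over a tree of thoughts, the sketch below uses beam search: a breadth-first search that keeps only the best few paths at each level. Beam search is just one possible choice of heuristic search, and `TOY_TREE`, `TOY_SCORES`, and every score in them are made up; in practice `expand` and `score` would call the LLM.

```python
from typing import Callable

Path = tuple[str, ...]


def beam_search(
    root: str,
    expand: Callable[[Path], list[str]],
    score: Callable[[Path], float],
    beam_width: int,
    max_depth: int,
) -> Path:
    """Return the top-scoring path at the deepest level reached."""
    frontier: list[Path] = [(root,)]
    best: Path = (root,)
    for _ in range(max_depth):
        candidates = [path + (thought,) for path in frontier for thought in expand(path)]
        if not candidates:
            break  # every path in the frontier is a leaf
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]  # prune the less promising branches
        best = frontier[0]
    return best


# Hypothetical tree and scores, standing in for LLM proposals and evaluations.
TOY_TREE = {
    "engagement": ["Improve UI/UX", "Implement gamification", "Personalize content"],
    "Improve UI/UX": ["Simplify navigation", "Speed up page loads"],
    "Personalize content": ["Recommend articles"],
}
TOY_SCORES = {
    "Improve UI/UX": 0.8, "Implement gamification": 0.4, "Personalize content": 0.7,
    "Simplify navigation": 0.6, "Speed up page loads": 0.9, "Recommend articles": 0.75,
}


def expand(path: Path) -> list[str]:
    return TOY_TREE.get(path[-1], [])


def score(path: Path) -> float:
    return TOY_SCORES.get(path[-1], 0.0)


best_path = beam_search("engagement", expand, score, beam_width=2, max_depth=2)
print(" -> ".join(best_path))
```

A wider beam explores more alternatives at the cost of more LLM calls; a beam width of 1 degenerates into greedy search with no alternatives kept.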

How to Use Tree of Thoughts Prompting with LangChain

How Does LangChain Utilize Tree of Thoughts?

LangChain, an open-source framework for building applications on top of language models, includes an experimental implementation of the Tree of Thoughts technique. This implementation serves as a real-world example of how the technique can be applied effectively.

- Wide Range of Ideas: LangChain uses Tree of Thoughts to generate a plethora of ideas or solutions for a given problem. This ensures that the platform explores multiple avenues before settling on the most promising one.

- Self-Evaluation: One of the standout features of LangChain's implementation is the ability of the system to evaluate itself at each stage. This self-evaluation is crucial for optimizing the thought process and ensuring that the final output is of the highest quality.

- Switching Mechanism: LangChain has integrated a switching mechanism that allows the system to pivot to alternative methods if the current line of thought proves to be less effective. This adds an extra layer of flexibility and adaptability to the process.

LangChain's successful implementation of Tree of Thoughts serves as a testament to the technique's efficacy and versatility. It showcases how the technique can be applied in real-world scenarios, providing valuable insights into its practical utility.
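The three behaviours listed above (generating several ideas, self-evaluating, and switching away from weak lines of thought) can be sketched generically as a depth-first search with backtracking. This is not LangChain's API: `Verdict`, `solve`, the toy tree, and the verdicts assigned to each thought are all hypothetical, and in a real system `generate` and `check` would be backed by an LLM.

```python
from enum import Enum
from typing import Callable, Optional


class Verdict(Enum):
    FINAL = "final"          # the path is a complete, acceptable solution
    PROMISING = "promising"  # keep going deeper along this path
    DEAD_END = "dead_end"    # abandon this path and switch to a sibling


def solve(
    path: list[str],
    generate: Callable[[list[str]], list[str]],
    check: Callable[[list[str]], Verdict],
    max_depth: int,
) -> Optional[list[str]]:
    """Depth-first search that backtracks whenever the checker rejects a path."""
    if len(path) >= max_depth:
        return None
    for thought in generate(path):      # wide range of ideas
        candidate = path + [thought]
        verdict = check(candidate)      # self-evaluation
        if verdict is Verdict.FINAL:
            return candidate
        if verdict is Verdict.PROMISING:
            result = solve(candidate, generate, check, max_depth)
            if result is not None:
                return result
        # Dead end, or a promising path that failed deeper down: try the next sibling.
    return None


# Made-up tree and verdicts for illustration.
TOY_TREE = {
    (): ["Implement gamification", "Improve UI/UX"],
    ("Implement gamification",): ["Add badges"],
    ("Improve UI/UX",): ["Speed up page loads"],
}
DEAD_ENDS = {"Add badges"}
FINALS = {"Speed up page loads"}


def generate(path: list[str]) -> list[str]:
    return TOY_TREE.get(tuple(path), [])


def check(path: list[str]) -> Verdict:
    if path[-1] in FINALS:
        return Verdict.FINAL
    if path[-1] in DEAD_ENDS:
        return Verdict.DEAD_END
    return Verdict.PROMISING


solution = solve([], generate, check, max_depth=3)
print(solution)
```

In this toy run the search first follows "Implement gamification", hits a dead end at "Add badges", backtracks, and then succeeds through "Improve UI/UX".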

What Does a Tree of Thoughts Implementation Look Like in LangChain?

Implementing the Tree of Thoughts in LangChain involves a series of steps that leverage both the hierarchical structure of the technique and the capabilities of Large Language Models. Below are some simplified Python snippets that illustrate the logic of each step; they are sketches of the approach and do not call LangChain's API directly.

Step 1. Initialize the Root Thought

First, you'll need to initialize the root thought or the starting point of your tree. This could be a query, a problem statement, or an idea you want to explore.

    # Initialize the root thought
    root_thought = "How to improve user engagement on a website?"

Step 2. Create Branching Nodes

Next, you'll create branching nodes that represent different lines of thought or solutions stemming from the root thought.

    # Create branching nodes
    branching_nodes = ["Improve UI/UX", "Implement gamification", "Personalize content"]

Step 3. Implement Heuristic Search

To navigate through the tree efficiently, you'll implement a heuristic search algorithm. This will guide the LLM in evaluating the effectiveness of each thought or node.

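    # Implement heuristic search
    def heuristic_search(node):
        # Placeholder scores for illustration only; replace this lookup with
        # your own heuristic, such as asking the LLM to rate the thought.
        placeholder_scores = {"Improve UI/UX": 0.8, "Implement gamification": 0.4, "Personalize content": 0.7}
        evaluated_value = placeholder_scores.get(node, 0.0)
        return evaluated_value

Step 4. Navigate and Evaluate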

Finally, you'll navigate through the tree, evaluating each node using the heuristic search and the LLM.

    # Navigate and evaluate
    threshold = 0.5  # illustrative cutoff; tune it for your heuristic
    promising_nodes = []
    for node in branching_nodes:
        value = heuristic_search(node)
        if value > threshold:
            # Explore this node further
            promising_nodes.append(node)

To recap the steps:

- Initialization: The root thought serves as the starting point, and branching nodes represent different lines of thought.

- Heuristic Search: This algorithm evaluates the effectiveness of each thought, guiding the LLM through the tree.

- Navigation and Evaluation: The final step involves navigating through the tree and evaluating each node to decide which branches to explore further.

By following these steps, you can implement the Tree of Thoughts technique in LangChain or any other framework that works with Large Language Models. The sample code provides a practical starting point for your own implementation.
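Putting the four steps together, the following sketch runs end to end without a model and explores two levels below the root. `FOLLOW_UPS`, `SCORES`, and `explore` are hypothetical names, and every follow-up idea and score is made up; a real system would obtain both from the LLM.

```python
from collections import deque

# Step 1: the root thought.
root_thought = "How to improve user engagement on a website?"

# Step 2: branching nodes, here a hypothetical lookup instead of LLM proposals.
FOLLOW_UPS = {
    root_thought: ["Improve UI/UX", "Implement gamification", "Personalize content"],
    "Improve UI/UX": ["Simplify navigation", "Speed up page loads"],
    "Personalize content": ["Recommend related articles"],
}

# Made-up scores standing in for an LLM- or rule-based evaluation.
SCORES = {
    "Improve UI/UX": 0.8,
    "Implement gamification": 0.4,
    "Personalize content": 0.7,
    "Simplify navigation": 0.6,
    "Speed up page loads": 0.9,
    "Recommend related articles": 0.45,
}


# Step 3: the heuristic.
def heuristic_search(node: str) -> float:
    return SCORES.get(node, 0.0)


# Step 4: navigate level by level, keeping only nodes above the threshold.
def explore(root: str, threshold: float, max_depth: int) -> list[list[str]]:
    """Return every root-to-node path whose nodes all score above `threshold`."""
    kept: list[list[str]] = []
    queue = deque([[root]])
    while queue:
        path = queue.popleft()
        if len(path) > max_depth:
            continue  # depth limit reached, do not expand further
        for node in FOLLOW_UPS.get(path[-1], []):
            if heuristic_search(node) > threshold:
                new_path = path + [node]
                kept.append(new_path)
                queue.append(new_path)
    return kept


kept_paths = explore(root_thought, threshold=0.5, max_depth=2)
for path in kept_paths:
    print(" -> ".join(path[1:]))
```

Because "Implement gamification" falls below the threshold at the first level, none of its follow-ups are ever generated, which is where the savings from pruning come from.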

Conclusion

The Tree of Thoughts Prompting technique is a revolutionary approach that has the potential to redefine the landscape of Prompt Engineering. Its hierarchical structure, coupled with the computational power of Large Language Models and the efficiency of heuristic search algorithms, makes it a versatile and effective tool for generating focused and relevant responses. LangChain's successful implementation serves as a real-world testament to its practical utility and effectiveness.

In this comprehensive guide, we've covered everything from the fundamentals of the technique to its practical implementation in LangChain, complete with sample code. Whether you're a seasoned expert or a curious beginner, understanding and implementing the Tree of Thoughts can give you a significant edge in the ever-evolving field of Prompt Engineering.

FAQs

What is a tree of thought?

A tree of thought is a hierarchical structure used in the Tree of Thoughts Prompting technique to guide the thought process of Large Language Models. It starts with a root thought and branches out into various nodes representing different lines of thought or solutions.

What is the tree of thought prompting method?

The Tree of Thoughts Prompting method is a specialized technique designed to generate more focused and relevant responses from Large Language Models. It employs a hierarchical structure and integrates with heuristic search algorithms to guide the thought process.

How do you implement a tree of thoughts in LangChain?

Implementing a tree of thoughts in LangChain involves initializing a root thought, creating branching nodes, implementing a heuristic search algorithm, and navigating through the tree to evaluate each node. The process is guided by the Large Language Model and can be implemented using the sample code provided in this guide.