QA-LoRA: A Guide to Fine-Tuning Large Language Models Efficiently


Welcome to the fascinating universe of Large Language Models (LLMs)! These computational giants are the backbone of numerous applications, from chatbots and translators to content generators and recommendation systems. However, as marvelous as they are, deploying them is no walk in the park. The computational and memory requirements can be staggering, often requiring specialized hardware and a lot of patience.

That's where QA-LoRA comes into play. It fine-tunes a quantized model with small low-rank adapters and keeps the result in quantized form, which lowers the memory needed for both fine-tuning and deployment. So, if you're struggling with the computational burden of LLMs or looking for a smarter way to fine-tune them, you've come to the right place.

Why QA-LoRA is a Game-Changer for LLMs

Want to learn the latest LLM News? Check out the latest LLM leaderboard!

What Exactly is QA-LoRA and How Does It Differ from LoRA?

Before diving into the nitty-gritty, let's define our topic. QA-LoRA stands for Quantization-Aware Low-Rank Adaptation. In simpler terms, it's a method designed to make the fine-tuning of Large Language Models more efficient. Now, you might be wondering, "What's LoRA then?" LoRA stands for Low-Rank Adaptation, a technique that cuts the cost of fine-tuning by training a small set of low-rank matrices instead of updating every weight in the model. What sets QA-LoRA apart is its quantization-aware aspect: the adapters are designed so that the fine-tuned model can still be stored and run in quantized form.

- Quantization: This is the process of mapping values from a large, continuous range onto a small set of discrete levels. In the context of LLMs, it means storing weights in low-bit formats such as 4-bit integers instead of 16-bit floats, which reduces the size of the model.

- Low-Rank Adaptation: This keeps the pre-trained weights frozen and represents the change needed for a new task as the product of two small matrices, so far fewer parameters have to be trained.

So, when you combine these two—quantization and low-rank adaptation—you get QA-LoRA, a method that not only reduces the model's size but also makes it computationally efficient. This is crucial for deploying LLMs on devices with limited computational resources.
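To make the quantization half concrete, here is a minimal sketch of uniform min-max quantization to 4 bits with NumPy. The function names `quantize_minmax` and `dequantize` are hypothetical, and this simple per-tensor scheme is an assumption chosen for illustration rather than the exact scheme used in the QA-LoRA paper.

```python
import numpy as np

def quantize_minmax(w, num_bits=4):
    """Map a float array to integer codes in [0, 2**num_bits - 1]."""
    levels = 2 ** num_bits - 1
    w_min, w_max = float(w.min()), float(w.max())
    # Guard against a constant array, where the range would be zero.
    scale = (w_max - w_min) / levels if w_max > w_min else 1.0
    codes = np.round((w - w_min) / scale).astype(np.uint8)
    return codes, scale, w_min

def dequantize(codes, scale, w_min):
    """Recover approximate float values from the integer codes."""
    return codes.astype(np.float32) * scale + w_min

rng = np.random.default_rng(0)
weights = rng.normal(size=(8, 8)).astype(np.float32)

codes, scale, w_min = quantize_minmax(weights, num_bits=4)
restored = dequantize(codes, scale, w_min)
max_error = float(np.abs(weights - restored).max())

print("distinct codes used:", len(np.unique(codes)))
print("largest rounding error:", max_error)
```

With rounding to the nearest level, the error per weight stays within about half of `scale`, which is why fewer bits (larger `scale`) cost more accuracy.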

The LoRA Method Simplified

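The LoRA method rests on the observation that the change a model needs during fine-tuning can be captured well by low-rank matrices. Instead of updating a full weight matrix, LoRA freezes it and trains a small pair of low-rank matrices alongside it, which reduces the computational requirements without sacrificing much in terms of performance. In the context of QA-LoRA, this low-rank adaptation works hand-in-hand with quantization to provide an even more efficient model. The steps below are a simplified illustration that uses SVD to expose low-rank structure in existing weights; in LoRA proper, the low-rank factors are learned during fine-tuning rather than computed by decomposition.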

- Step 1: Start by identifying the weight matrices in your LLM that are suitable for low-rank approximation.

- Step 2: Use mathematical techniques like Singular Value Decomposition (SVD) to find these low-rank approximations.

- Step 3: Replace the original weight matrices with these low-rank approximations.

- Step 4: Apply quantization to further reduce the model's size.

By following these steps, you can significantly reduce the computational burden of your LLM, making it easier and faster to deploy.
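For comparison with the SVD-based steps above, the LoRA paper keeps the pre-trained weight frozen and learns a low-rank update next to it. Below is a minimal NumPy sketch of that forward pass. The names `lora_forward`, `rank`, and `alpha` and the layer sizes are illustrative assumptions, and no training loop is included.

```python
import numpy as np

def lora_forward(x, w_frozen, a, b, alpha=16.0):
    """Frozen linear layer plus a scaled low-rank update: x W^T + (alpha/r) x A^T B^T."""
    rank = a.shape[0]
    return x @ w_frozen.T + (alpha / rank) * (x @ a.T @ b.T)

d_in, d_out, rank = 64, 32, 4
rng = np.random.default_rng(0)

w_frozen = rng.normal(size=(d_out, d_in))      # pre-trained weight, never updated
a = rng.normal(scale=0.01, size=(rank, d_in))  # trainable, small random init
b = np.zeros((d_out, rank))                    # trainable, zero init

x = rng.normal(size=(2, d_in))
y = lora_forward(x, w_frozen, a, b)

full_params = w_frozen.size
lora_params = a.size + b.size
print("trainable parameters:", lora_params, "instead of", full_params)
```

Because `b` starts at zero, the adapted layer initially behaves exactly like the frozen one, and only `a` and `b` would receive gradient updates during fine-tuning.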

Balancing Quantization and Adaptation in QA-LoRA

One of the most intriguing aspects of QA-LoRA is how it balances the degrees of freedom between quantization and adaptation. This balance is crucial because it allows QA-LoRA to be both efficient and accurate. Too much quantization can lead to a loss of accuracy, while too much adaptation can make the model computationally expensive. QA-LoRA finds the sweet spot between these two.

- Efficiency: By using quantization, QA-LoRA reduces the model's size, making it faster to load and run.

- Accuracy: Through low-rank adaptation, it retains the model's performance, ensuring that you don't have to compromise on quality.

As we can see, QA-LoRA offers a balanced approach that makes it a go-to method for anyone looking to fine-tune and deploy Large Language Models efficiently. It combines the best of both worlds—efficiency and accuracy—making it a game-changer in the field of machine learning.
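One way to picture this balance is group-wise. Each group of input rows gets its own scale and zero point, which gives quantization more freedom, while the adapter only sees one pooled value per group, which gives adaptation less. The sketch below is a simplified reading of that idea, not the authors' implementation: all names are hypothetical, the zero points are kept in floating point, and sum pooling is an assumption. It checks numerically that an adapter restricted this way can be folded into the zero points without touching the integer codes.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out, group_size, rank, bits = 16, 8, 4, 2, 4
n_groups = d_in // group_size
levels = 2 ** bits - 1

# Group-wise quantization of W (d_in x d_out) along the input dimension.
w = rng.normal(size=(d_in, d_out))
w_groups = w.reshape(n_groups, group_size, d_out)
w_min = w_groups.min(axis=1, keepdims=True)
scale = (w_groups.max(axis=1, keepdims=True) - w_min) / levels
codes = np.round((w_groups - w_min) / scale)   # integer codes in [0, levels]
zero = -w_min / scale                          # so that w ~ scale * (codes - zero)

def dequant(codes, scale, zero):
    """Rebuild a float (d_in x d_out) matrix from codes and per-group parameters."""
    return (scale * (codes - zero)).reshape(d_in, d_out)

# Adapter that only sees one pooled input value per group.
a = rng.normal(size=(n_groups, rank))
b = rng.normal(size=(rank, d_out))
x = rng.normal(size=(3, d_in))
x_pooled = x.reshape(3, n_groups, group_size).sum(axis=2)
y_adapter = x @ dequant(codes, scale, zero) + x_pooled @ a @ b

# Fold the adapter into the zero points; the integer codes stay unchanged.
zero_merged = zero - (a @ b)[:, None, :] / scale
y_merged = x @ dequant(codes, scale, zero_merged)

print("outputs match after merging:", np.allclose(y_adapter, y_merged))
```

The merge works because the pooled adapter adds the same offset to every row within a group, and a per-group offset is exactly what a zero point encodes.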

How to Get Started with QA-LoRA

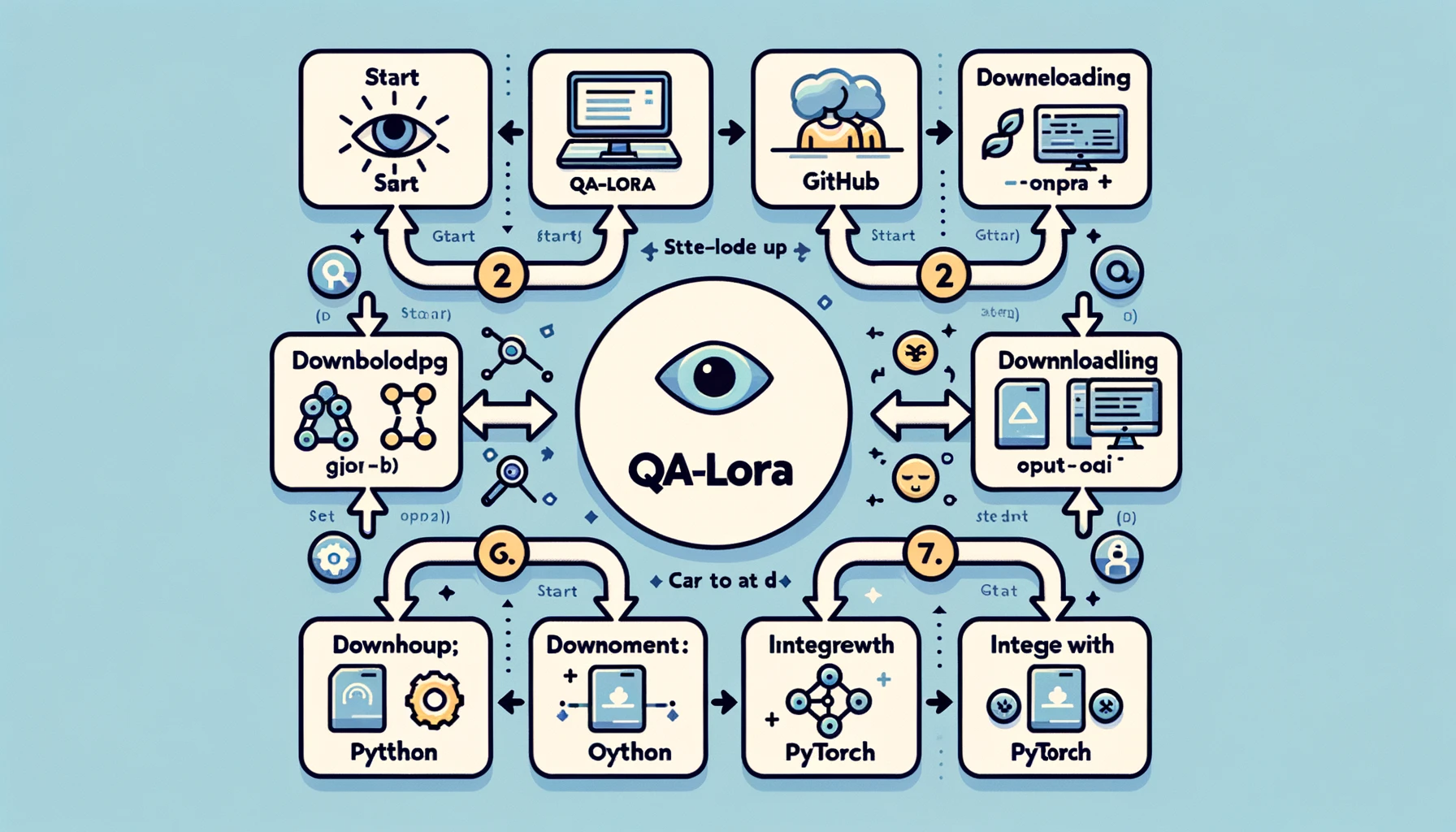

Setting Up Your Environment for QA-LoRA

Before you can dive into the world of QA-LoRA, you'll need to set up your development environment. This is a crucial step because the right setup can make your journey smoother and more efficient. Here's how to go about it:

- Step 1: Install Python: If you haven't already, install Python on your system. Python 3.x is recommended.

- Step 2: Set Up a Virtual Environment: It's always a good idea to work in a virtual environment to avoid dependency conflicts. You can use tools like venv or conda for this.

- Step 3: Install Required Libraries: You'll need libraries like PyTorch, NumPy, and others. Use pip or conda to install these.

- Step 4: Clone the GitHub Repository: There's a GitHub repository dedicated to QA-LoRA. Clone it to your local machine to get the sample code and other resources.

By following these steps, you'll have a robust development environment ready for implementing QA-LoRA. This setup ensures that you have all the tools and libraries needed to make your implementation as smooth as possible.
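Once the steps above are done, a quick check from Python can confirm that the libraries are importable inside the virtual environment. This is a small convenience sketch: the helper name `check_packages` is hypothetical, and the package list is an assumption based on the libraries mentioned above.

```python
import importlib.util
import sys

def check_packages(names):
    """Return {package_name: True/False} depending on whether it can be found."""
    return {name: importlib.util.find_spec(name) is not None for name in names}

status = check_packages(["torch", "numpy"])

print("Python", sys.version.split()[0])
for name, found in status.items():
    print(f"{name}: {'found' if found else 'missing'}")
```

Any package reported as missing can then be installed with pip or conda before moving on.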

Implementing QA-LoRA: A Walkthrough

Now that your environment is set up, let's get down to the actual implementation. This is where the rubber meets the road, and you'll see QA-LoRA in action. Here's a step-by-step guide to implementing QA-LoRA:

- Step 1: Import Libraries: Start by importing all the necessary libraries. This usually includes PyTorch for the model and NumPy for numerical operations.

      import torch
      import numpy as np

- Step 2: Load Your Model: Load the Large Language Model you wish to fine-tune. This could be a pre-trained model or a model you've trained yourself.

      # Assumes the file holds a complete pickled model from a trusted source
      model = torch.load('your_model.pth', weights_only=False)

- Step 3: Identify Weight Matrices: Identify the weight matrices in your model that are suitable for low-rank approximation. These are usually the fully connected layers.

      linear_layers = [m for m in model.modules() if isinstance(m, torch.nn.Linear)]
      weight_matrix = linear_layers[0].weight.detach().float()

- Step 4: Apply Low-Rank Approximation: Use techniques like Singular Value Decomposition (SVD) to approximate these weight matrices. Keeping only the largest singular values is what makes the result low-rank.

      rank = 8  # illustrative value, tune per layer
      u, s, vh = torch.linalg.svd(weight_matrix, full_matrices=False)

- Step 5: Replace Original Matrices: Replace the original weight matrices with the low-rank approximations.

      approx_matrix = u[:, :rank] @ torch.diag(s[:rank]) @ vh[:rank, :]
      linear_layers[0].weight.data.copy_(approx_matrix)

- Step 6: Apply Quantization: Finally, apply quantization to further reduce the model's size. This can be done using PyTorch's dynamic quantization utilities, which convert the linear layers to 8-bit integers.

      quantized_model = torch.quantization.quantize_dynamic(model, {torch.nn.Linear}, dtype=torch.qint8)

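By following this walkthrough, you'll have a working pipeline that combines low-rank approximation with quantization, the two ingredients QA-LoRA builds on. Keep in mind that this is a simplified illustration: the method described in the QA-LoRA paper fine-tunes low-rank adapters on top of an already quantized model, so refer to the paper and the repository for the full algorithm.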
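To try the low-rank steps without a real checkpoint, the same idea can be run on a toy model. The sketch below assumes PyTorch is installed. The helper `truncate_linear_layers`, the toy architecture, and the rank value are hypothetical choices for illustration, and quantization is left out to keep the example small.

```python
import torch
from torch import nn

def truncate_linear_layers(model, rank):
    """Overwrite each nn.Linear weight with its best rank-`rank` approximation."""
    replaced = []
    with torch.no_grad():
        for name, module in model.named_modules():
            if isinstance(module, nn.Linear):
                u, s, vh = torch.linalg.svd(module.weight.float(), full_matrices=False)
                k = min(rank, s.numel())
                # Scale the kept left singular vectors, then project back.
                approx = (u[:, :k] * s[:k]) @ vh[:k, :]
                module.weight.copy_(approx)
                replaced.append(name)
    return replaced

torch.manual_seed(0)
toy_model = nn.Sequential(nn.Linear(32, 64), nn.ReLU(), nn.Linear(64, 16))

names = truncate_linear_layers(toy_model, rank=4)
ranks = [int(torch.linalg.matrix_rank(toy_model[i].weight)) for i in (0, 2)]
print("layers replaced:", names)
print("numerical rank after truncation:", ranks)
```

On a real model you would compare outputs before and after truncation on held-out data, since a rank that is too small can noticeably hurt quality.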

The Next Steps in QA-LoRA Research

While QA-LoRA is already a groundbreaking method, it's worth noting that research in this area is far from over. The field is ripe for innovation, and there are several avenues for further improvement. For instance, current research is focused on making QA-LoRA even more efficient without compromising on accuracy. This involves fine-tuning the balance between quantization and low-rank adaptation, among other things.

- Optimizing Quantization: One area of focus is to optimize the quantization process to ensure minimal loss of information.

- Adaptive Low-Rank Approximation: Another avenue is to make the low-rank approximation process adaptive, allowing the model to adjust itself based on the task at hand.

These ongoing research efforts aim to make QA-LoRA even more robust and versatile, ensuring that it remains the go-to method for fine-tuning Large Language Models efficiently.
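As a toy illustration of what an adaptive rank could mean, the sketch below picks the smallest rank whose singular values retain a chosen share of a matrix's energy. This heuristic and the name `rank_for_energy` are assumptions made for illustration; they are not taken from the QA-LoRA paper.

```python
import numpy as np

def rank_for_energy(matrix, energy=0.9):
    """Smallest rank whose squared singular values sum to at least `energy` of the total."""
    s = np.linalg.svd(matrix, compute_uv=False)
    cumulative = np.cumsum(s ** 2) / np.sum(s ** 2)
    rank = int(np.searchsorted(cumulative, energy)) + 1
    return min(rank, len(s))

rng = np.random.default_rng(0)

# A matrix that is rank 3 up to a small amount of noise.
low_rank = rng.normal(size=(64, 3)) @ rng.normal(size=(3, 64))
noisy = low_rank + 0.01 * rng.normal(size=(64, 64))

chosen = rank_for_energy(noisy, energy=0.99)
print("rank chosen for 99% energy:", chosen)
```

A layer whose weights are close to low-rank would get a small rank under this rule, while a layer with a flat singular value spectrum would get a larger one.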

You can read more in the QA-LoRA paper.

Wrapping It Up: Why QA-LoRA Matters

Final Thoughts on QA-LoRA and Its Impact

As we reach the end of this guide, it's worth taking a step back to look at what QA-LoRA offers. It is not just another piece of technical jargon thrown into the ever-expanding sea of machine learning algorithms. It addresses real-world challenges in deploying Large Language Models.

- Efficiency: One of the most compelling advantages of QA-LoRA is its efficiency. By combining quantization and low-rank adaptation, it reduces the computational and memory requirements of LLMs. This is a boon for developers and organizations looking to deploy these models on a large scale or on devices with limited resources.

- Accuracy: QA-LoRA is designed to keep accuracy loss small. Because the adaptation is aware of the quantization, the fine-tuned model can stay in low-bit form without a separate, lossy quantization step at the end. This balance between efficiency and accuracy is what sets QA-LoRA apart from other fine-tuning methods.

- Versatility: The method is not tied to a single model. It can be applied to Large Language Models of different sizes and to a range of downstream tasks.

- Ease of Implementation: With readily available code, implementing QA-LoRA is within reach. Even if you're not a machine learning expert, the method is accessible and straightforward to apply.

In summary, QA-LoRA is more than just a fine-tuning method; it's a paradigm shift in how we approach the deployment of Large Language Models. It offers a balanced, efficient, and effective way to bring these computational giants closer to practical, real-world applications. If you're in the field of machine learning or are intrigued by the potential of Large Language Models, QA-LoRA is a topic you can't afford to ignore.

Conclusion

The world of Large Language Models is exciting but fraught with challenges, especially when it comes to deployment. QA-LoRA emerges as a beacon of hope, offering a balanced and efficient method for fine-tuning these models. From its technical intricacies to its practical implementation, QA-LoRA stands as a testament to what can be achieved when efficiency and accuracy go hand in hand.

So, as you venture into your next project involving Large Language Models, remember that QA-LoRA is your trusted companion for efficient and effective fine-tuning. Give it a try, and join the revolution that is setting new benchmarks in the world of machine learning.
